9 Agentic Patterns, Simply Explained

#140: The design decisions behind modern AI systems—how each design pattern works, where it breaks, and when to use it.


When you build software with LLMs, you need to decide how much control to give the model.

Does your code run every step, or does the model figure out the steps on its own?

Agentic patterns are design patterns for making that decision. They’re the same architecture choices you already make in any system: who controls what happens next, what happens on failure, and how data moves between components.

The difference is that some of those components are now language models.

This newsletter covers nine of those patterns…

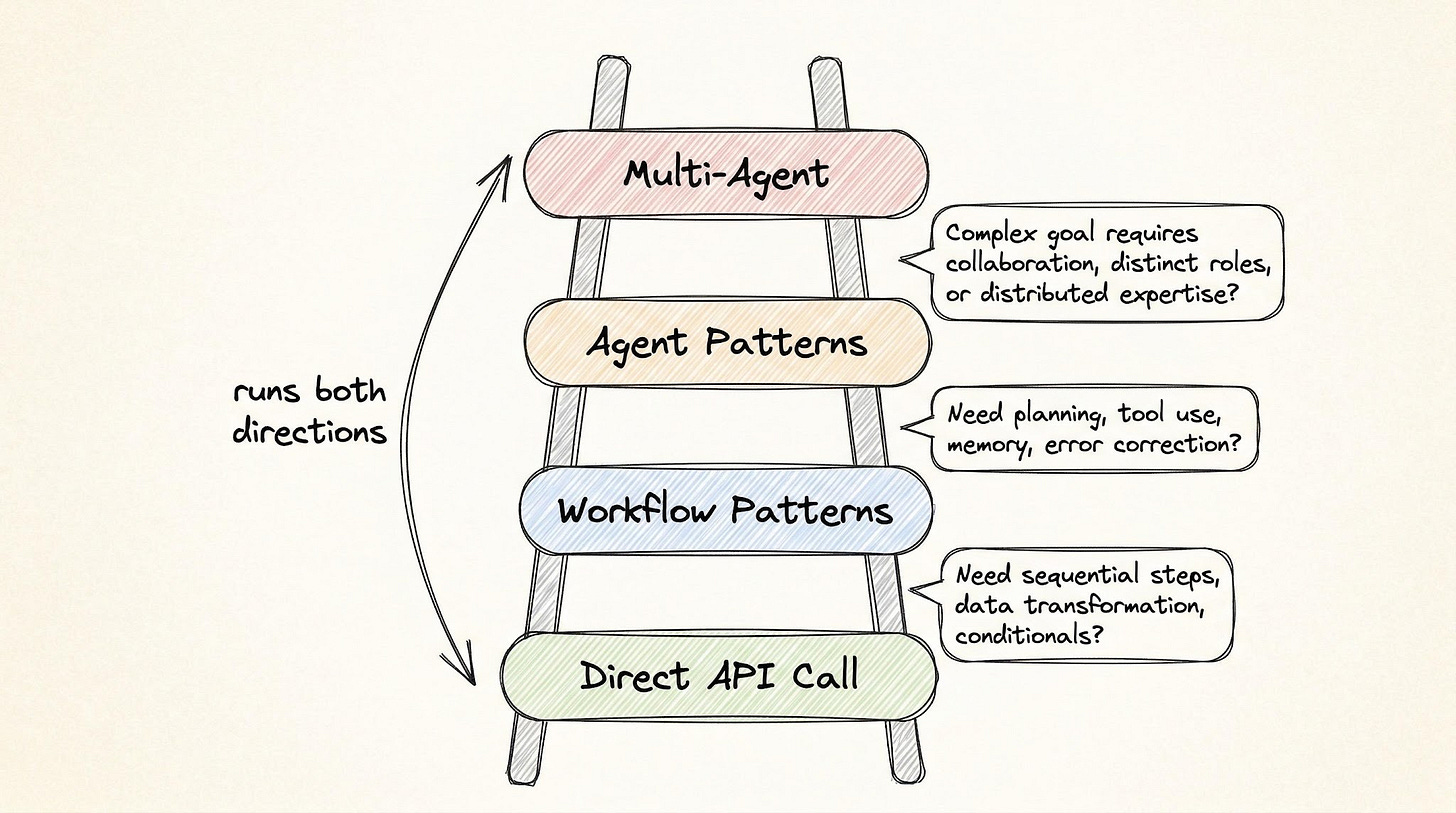

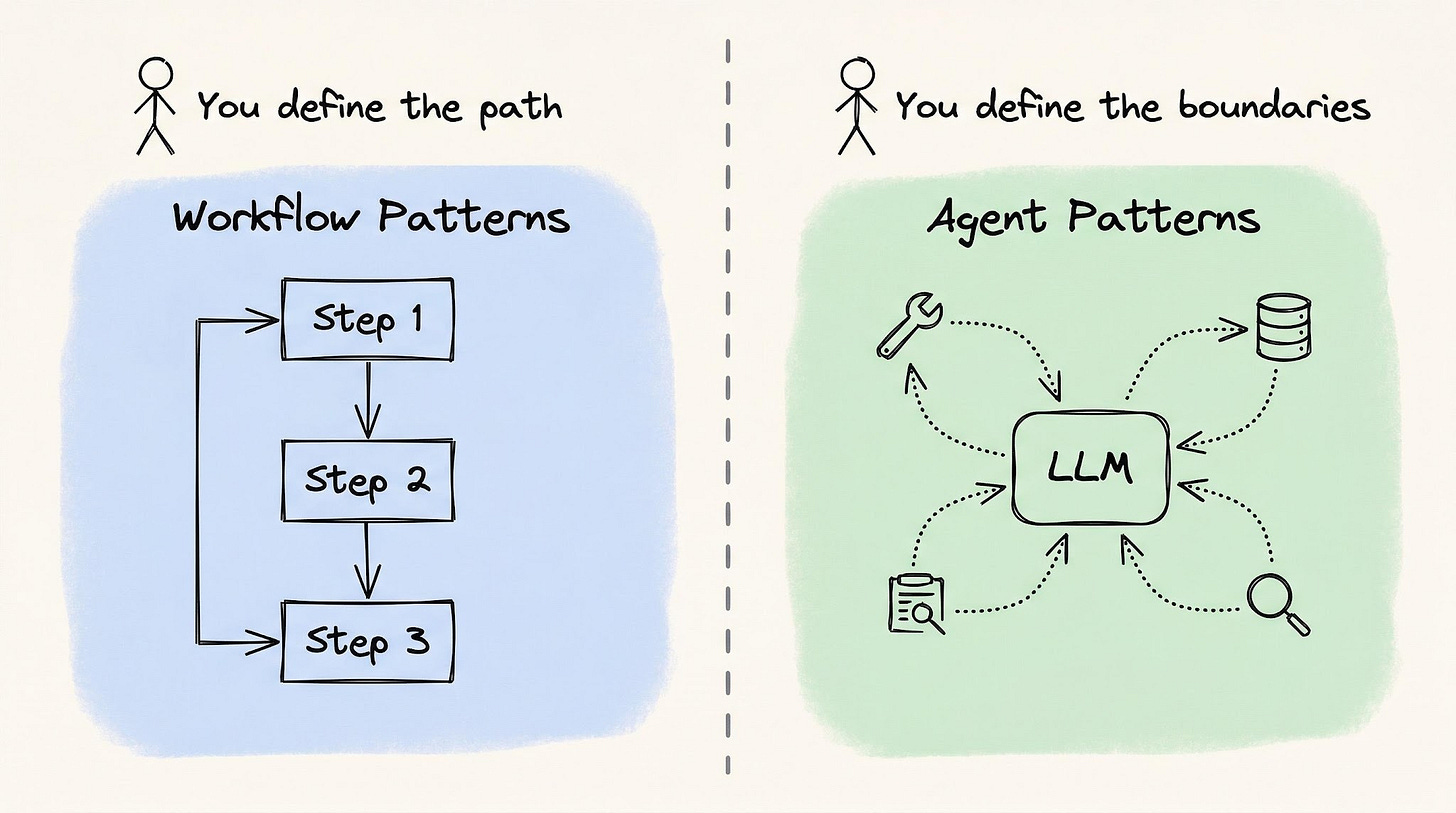

On one end are workflow patterns, where your code controls every step. On the other end are agent patterns, where the Large Language Model (LLM) decides what to do next.

That boundary is a decision about how much control to hand to the model: at some point, you stop telling the model what to do and start letting it figure that out.

The first question isn’t which pattern to use. It’s whether you need one at all.

Let’s start there...


(Thanks to AWS for partnering on this post.)

Inside this newsletter, you’ll get:

The shift from prompts to systems. Why simple LLM calls break down, and how workflows and agents introduce structured decision-making.

A practical escalation ladder. When to stay simple, when to use workflows, and when agents are actually justified.

Workflow patterns. Chaining, routing, parallelization, and orchestrator-workers, with clear tradeoffs in cost, latency, and failure modes.

Agent patterns. Reflection, tool use, ReAct, and planning, focusing on how the model makes decisions and where things go wrong.

Evaluator-optimizer loop. How to add a reliability layer with evaluation, iteration limits, and cost control.

Real-world case study. Combining multiple patterns in an AI code review pipeline, showing how to choose the simplest architecture that works.

Not everything needs an agent

Most tasks don’t need an agent…

Most tasks don’t even need a workflow… The default should be the simplest setup that works, and you escalate only when that setup breaks.

Start with a direct API call to an LLM. You can do more with this than most people think:

Summarization

Classification

Extraction

Rewriting

Translation

Code generation with clear specs

Most people skip this level too quickly!

Workflow patterns are the next step up.

Escalate when a task has many steps, and when focused attention at each step improves the output. Between those steps, you define validation gates: checks that verify the output before passing it forward. The defining feature: you can write every step down before the system runs. Your code owns the control flow.

And the LLM handles the details.

Agent patterns are for when the number and type of steps are unknown until the system runs.

The model needs to look at results, decide what to do next, and choose its own path. For example, say you built a support agent that handles order cancellations. A customer asks to cancel, but the agent doesn’t know upfront whether it needs to check the refund policy, look up the shipping status, or pass the issue to a human.

The steps depend on what it finds…

Here’s how you know it’s time to switch: you write more code handling errors and exceptions than doing the actual work. Or you keep adding special cases for situations the LLM runs into that you didn’t plan for.

If you can still write down all the steps before the system runs, stick with a workflow.

Agent patterns also sit inside multi-agent architectures, where many agents cooperate on the same problem, each with its own role.

One common mistake: your prototype gets 70-80% of the way there, and you assume the architecture needs upgrading. It usually doesn’t. The real issue is usually prompt quality or missing validation gates. Move to a more complex pattern only after the simpler one has actually failed, not when it merely feels limiting.

With that baseline set, here are the four workflow patterns:

Workflow patterns: you control the flow

With workflow patterns, your code controls what happens.

You decide the steps, the order, and the checks between them. The LLM handles each step, but your code is in charge…

1. Prompt chaining

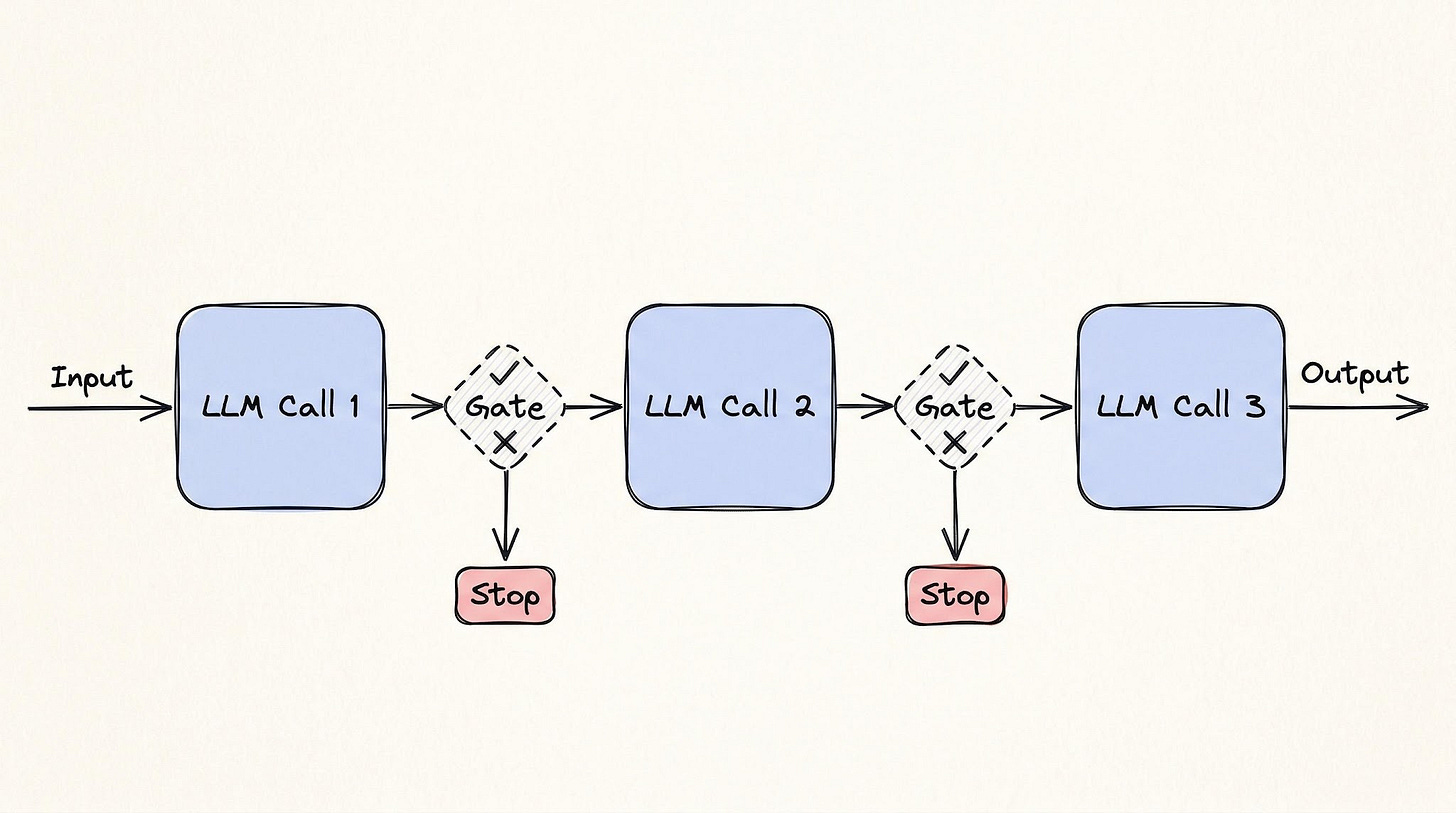

Prompt chaining breaks a task into a series of LLM calls.

Each call takes the output from the previous step, and a check runs between them to ensure the result is correct before moving on.

If you’ve ever set up a CI/CD (Continuous Integration/Continuous Deployment) pipeline, this is the same idea. A build pipeline compiles, lints, tests, and packages. Each step must pass before the next one runs.

If tests fail, the pipeline stops.

For example, in legal contract review, this looks like: extract all clauses from a contract, classify each clause by risk level, then generate a summary of high-risk items for human review. Products like Thomson Reuters CoCounsel and Robin AI run variations of this pipeline.

Each step gets a focused prompt with a narrow task, and a validation gate checks the output before passing it forward.
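The contract review chain above can be sketched in a few lines. This is a minimal illustration, not how any of the named products work: `fake_llm` is a hypothetical stand-in for a real model call, and `GateError` and `run_chain` are names made up for this example.

```python
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns canned output."""
    if prompt.startswith("EXTRACT"):
        return "Clause A: unlimited liability\nClause B: 30-day notice"
    if prompt.startswith("CLASSIFY"):
        clauses = prompt.split("\n")[1:]
        return "\n".join(
            f"{c} => {'HIGH' if 'liability' in c else 'LOW'}" for c in clauses
        )
    if prompt.startswith("SUMMARIZE"):
        high = [row for row in prompt.split("\n")[1:] if row.endswith("HIGH")]
        return f"{len(high)} high-risk clause(s): " + "; ".join(high)
    return ""


class GateError(Exception):
    """Raised when a validation gate rejects a step's output."""


def run_chain(contract: str, llm: Callable[[str], str] = fake_llm) -> str:
    # Step 1: extract clauses, then gate on "did we get anything at all?"
    clauses = llm(f"EXTRACT clauses from:\n{contract}")
    if not clauses.strip():
        raise GateError("extraction returned nothing")

    # Step 2: classify each clause, then gate on shape and allowed labels.
    labeled = llm(f"CLASSIFY each clause as HIGH or LOW risk:\n{clauses}")
    rows = labeled.splitlines()
    same_count = len(rows) == len(clauses.splitlines())
    valid_labels = all(row.endswith(("HIGH", "LOW")) for row in rows)
    if not (same_count and valid_labels):
        raise GateError("classification output malformed")

    # Step 3: summarize high-risk items for human review.
    return llm(f"SUMMARIZE high-risk items:\n{labeled}")
```

Your code owns the order of the steps and decides when to stop. The gates here only check shape (non-empty output, one label per clause), so a clause labeled LOW that should be HIGH would still pass.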

Tradeoffs

Latency grows linearly…

Five steps mean five round trips. Errors carry forward: if step two misclassifies a clause, steps three through five process bad input. The gates between steps catch these failures early, but they only catch what the conditions you write can catch.

2. Routing

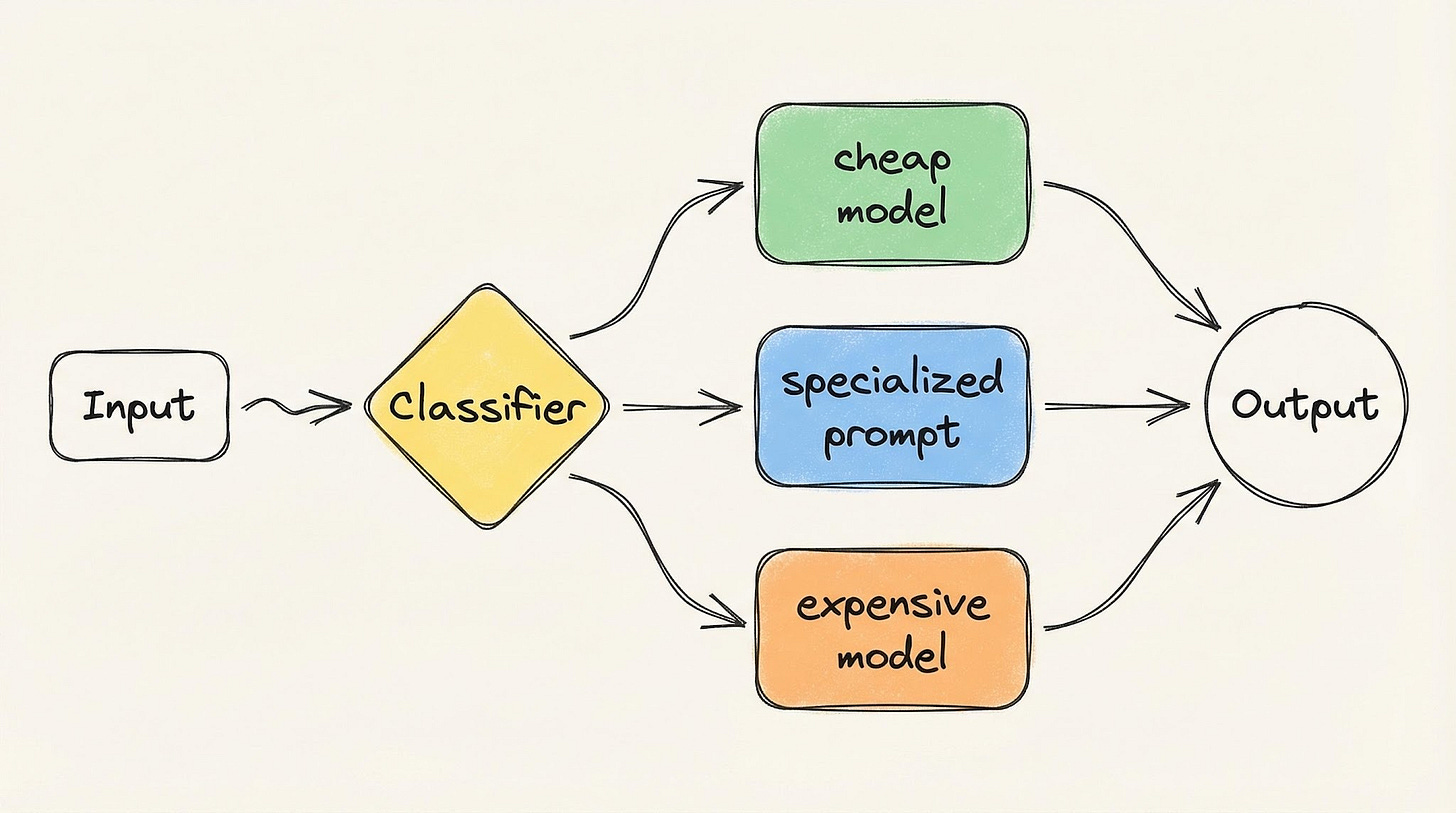

A routing pattern classifies the input and sends it to the right handler: a different prompt, a different model, or a different sub-workflow.

Each handler is built for one type of input.

This is like a hospital front desk. A patient walks in, the front desk checks their symptoms, and then sends them to the right doctor. The front desk doesn’t treat anyone. And the doctor doesn’t check in patients.

Sierra AI routes across 15+ models this way.

Classification tasks go to one model, tool calling to another, and response generation to a third. Customer support platforms like Intercom Fin route each customer request based on what the customer wants and how they feel about it, sending requests to either an AI handler or a human agent.

The cost argument is built into the pattern…

Simple queries hit cheap, fast models, while complex ones hit expensive, capable ones. You’re not paying the highest prices for work that a smaller model handles well.
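Here is a rough sketch of a router, assuming a keyword-based classifier for illustration. In practice the classifier is often a small model. Every name below (`classify`, `cheap_model`, `capable_model`, `human_handoff`) is hypothetical and not taken from Sierra or Intercom.

```python
from typing import Callable, Dict


def classify(query: str) -> str:
    """Hypothetical classifier; a real one would likely be a small LLM."""
    text = query.lower()
    if "refund" in text or "cancel" in text:
        return "billing"
    if len(text.split()) > 12:
        return "complex"
    return "simple"


# Hypothetical handlers: each is built for exactly one type of input.
def cheap_model(query: str) -> str:
    return f"[small-model] {query}"


def capable_model(query: str) -> str:
    return f"[large-model] {query}"


def human_handoff(query: str) -> str:
    return f"[human] {query}"


HANDLERS: Dict[str, Callable[[str], str]] = {
    "simple": cheap_model,
    "complex": capable_model,
    "billing": human_handoff,
}


def route(query: str) -> str:
    label = classify(query)
    # Unknown labels fall back to the capable model rather than failing.
    handler = HANDLERS.get(label, capable_model)
    return handler(query)
```

The router never answers anything itself. It only picks a handler, which is why its accuracy matters so much.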

Tradeoffs

In one word: misclassification.

A complex query routed to the cheaper model produces a poor answer. A simple query routed to the expensive model is just a waste of money.

Either way, the router is a single point of failure, and its accuracy sets the upper limit for the entire system.

3. Parallelization

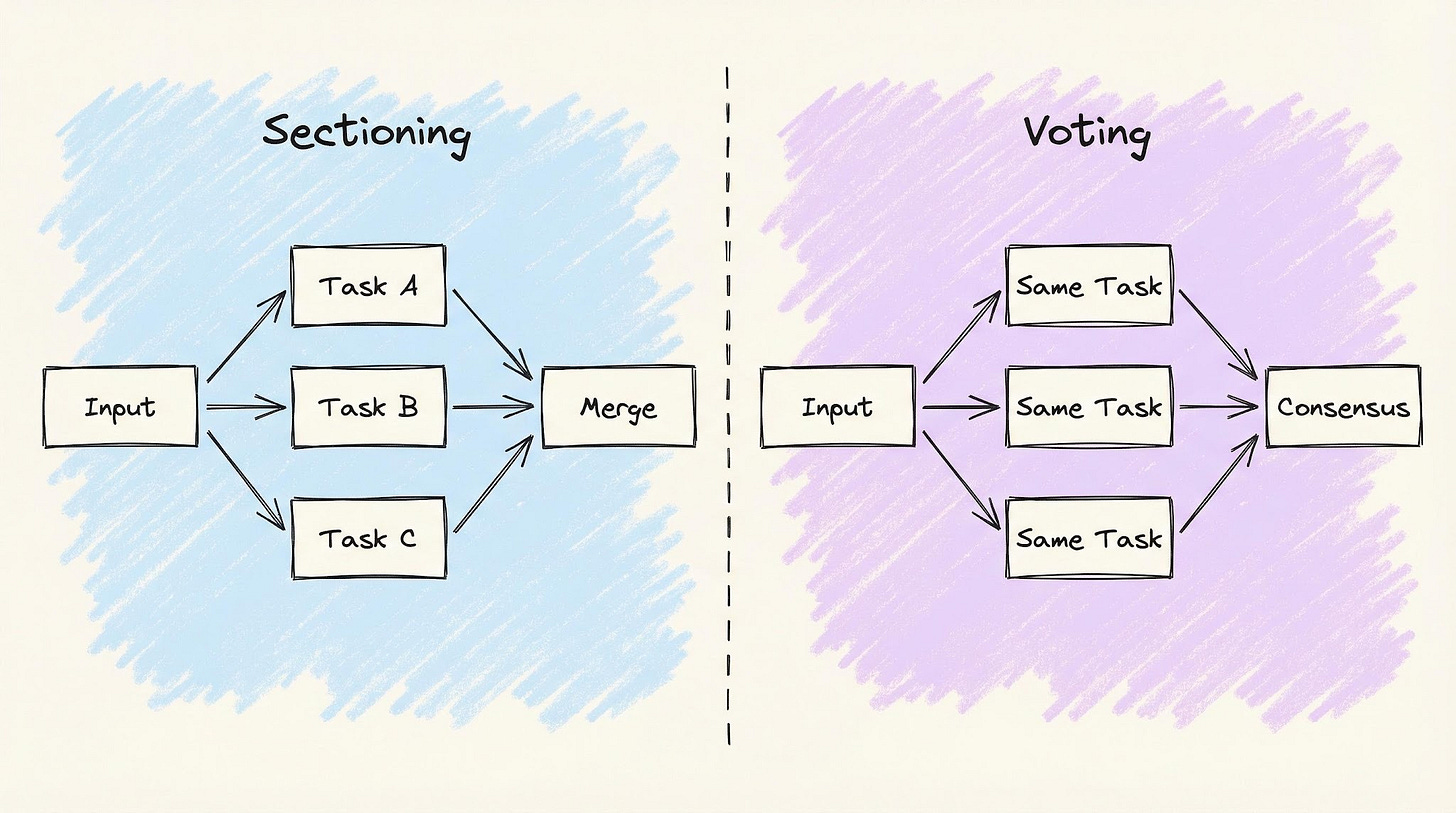

In software, parallelization means running many tasks simultaneously rather than one after another. With LLMs, it has two variants that solve different problems…

Sectioning splits a task into independent parts, runs them in parallel, and merges the results. This is like a construction crew. Electricians, plumbers, and carpenters work in the same building at the same time. Their work gets combined at the end.

GitHub Advanced Security works this way: CodeQL and third-party tools like Snyk and Semgrep scan the same pull request in parallel, each producing independent security alerts.
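A minimal sketch of sectioning with `asyncio.gather`, assuming three independent checks over the same diff. The scanner functions are hypothetical string checks standing in for separate LLM calls or tools, not anything GitHub actually runs.

```python
import asyncio


# Hypothetical scanners: each looks at the same diff for a different problem.
async def scan_secrets(diff: str) -> list[str]:
    await asyncio.sleep(0)  # stands in for network or model latency
    return ["hardcoded secret"] if "API_KEY=" in diff else []


async def scan_eval(diff: str) -> list[str]:
    await asyncio.sleep(0)
    return ["use of eval"] if "eval(" in diff else []


async def scan_todo(diff: str) -> list[str]:
    await asyncio.sleep(0)
    return ["leftover TODO"] if "TODO" in diff else []


async def review(diff: str) -> list[str]:
    # Run all sections concurrently; gather keeps results in call order.
    branches = await asyncio.gather(
        scan_secrets(diff), scan_eval(diff), scan_todo(diff)
    )
    # Merge step: flatten each branch's findings into one list.
    return [finding for branch in branches for finding in branch]


if __name__ == "__main__":
    print(asyncio.run(review("API_KEY=abc\nresult = eval(user_input)")))
```

Each branch does a different job, and the merge step is plain code, not another model call.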

Voting runs the same task many times with different prompts and combines the answers. This is like a jury. Each juror looks at the same evidence and gives their own opinion, and the group decides together.

You’d build this for something like a security review: three separate prompts analyze the same code change for vulnerabilities, and you flag an issue only if two or more agree. Running the task many times is more expensive, but it produces fewer false alarms.
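The two-out-of-three rule can be sketched like this. The three voters are hypothetical stand-ins for three differently prompted LLM calls, and the string checks inside them are only there to make the example runnable.

```python
from typing import Callable, List


# Hypothetical voters: same code change, three different "prompts".
def voter_dynamic_code(code: str) -> bool:
    return "eval(" in code or "exec(" in code


def voter_untrusted_input(code: str) -> bool:
    return "input(" in code and "eval(" in code


def voter_shell(code: str) -> bool:
    return "os.system(" in code


VOTERS: List[Callable[[str], bool]] = [
    voter_dynamic_code,
    voter_untrusted_input,
    voter_shell,
]


def flag_by_vote(code: str, threshold: int = 2) -> bool:
    """Flag the change only if at least `threshold` voters agree."""
    votes = sum(1 for voter in VOTERS if voter(code))
    return votes >= threshold
```

With `threshold=2`, a single voter raising an alarm is ignored. Raising the threshold trades missed issues for fewer false alarms, and lowering it does the opposite.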

In short, sectioning gives each worker a different job; voting gives every worker the same job.

Tradeoffs

Cost multiplies with every parallel branch.

Partial failures are a design decision you need to plan for up front: if one branch fails, do you retry it, proceed with the others, or fail the whole operation? There’s no universal answer, and a wrong choice is expensive.
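One way to make that choice explicit is to pass it in as a policy. The sketch below covers two of the three options (proceed with the others, or fail the whole operation) and leaves retries out for brevity. `Policy`, `ok_branch`, `bad_branch`, and `run_branches` are hypothetical names.

```python
import asyncio
from enum import Enum
from typing import Awaitable, List


class Policy(Enum):
    FAIL_ALL = "fail_all"  # any failed branch fails the whole operation
    PROCEED = "proceed"    # drop failed branches, keep the rest


async def ok_branch(name: str) -> str:
    return f"{name}: done"


async def bad_branch(name: str) -> str:
    raise RuntimeError(f"{name}: timed out")


async def run_branches(branches: List[Awaitable[str]], policy: Policy) -> List[str]:
    # return_exceptions=True hands failures back as values instead of raising,
    # so every branch gets to finish before we apply the policy.
    results = await asyncio.gather(*branches, return_exceptions=True)
    failures = [r for r in results if isinstance(r, Exception)]
    if failures and policy is Policy.FAIL_ALL:
        raise RuntimeError(f"{len(failures)} branch(es) failed")
    return [r for r in results if not isinstance(r, Exception)]
```

The point is that the policy is a parameter you chose on purpose, not a side effect of whichever exception happened to surface first.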

4. Orchestrator-workers

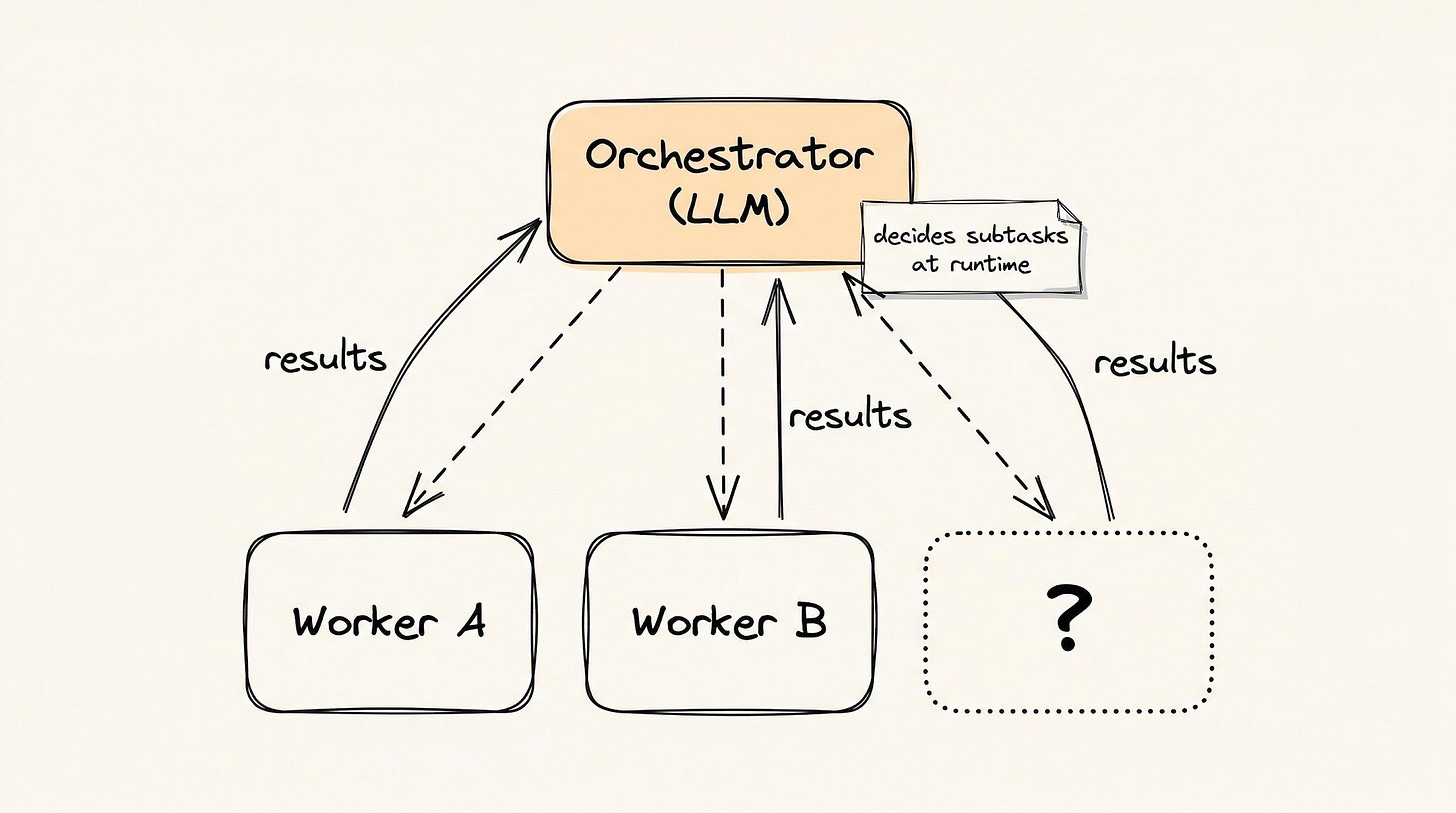

The orchestrator-workers pattern uses one central LLM to break a task into subtasks, assign each subtask to a worker LLM, and combine the results.

The difference from the earlier patterns is that the subtasks aren’t known in advance. The orchestrator figures them out as it goes. This is like a general contractor building a house:

They hire the right people, but don’t know every subcontractor they’ll need upfront

A plumber finds a structural problem, so the contractor brings in a structural engineer

The work plan changes as the project reveals new problems

Cursor’s agent mode works this way. It starts with different agents for different parts of the codebase:

One adding tests

Another updating documentation

A third refactoring shared utilities

You’re trusting the LLM to figure out what work needs to be done, not just to do the work you’ve assigned.
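A rough sketch of that loop, assuming the orchestrator is asked for new subtasks after each round of worker results. `fake_planner` and `fake_worker` are hypothetical stand-ins for LLM calls with canned behavior, and this is not how Cursor is implemented.

```python
from typing import List


def fake_planner(goal: str, done: List[str]) -> List[str]:
    """Hypothetical orchestrator call: next subtasks given results so far."""
    if not done:
        return ["add tests", "update docs"]
    found_problem = any("structural problem" in result for result in done)
    already_refactored = any("refactor" in result for result in done)
    if found_problem and not already_refactored:
        # The plan changes because a worker surfaced something new.
        return ["refactor shared utilities"]
    return []


def fake_worker(subtask: str) -> str:
    """Hypothetical worker call: does one subtask and reports back."""
    if subtask == "add tests":
        return "add tests: found structural problem in utils"
    return f"{subtask}: ok"


def orchestrate(goal: str, max_rounds: int = 3) -> List[str]:
    done: List[str] = []
    for _ in range(max_rounds):  # hard cap so planning can't run forever
        subtasks = fake_planner(goal, done)
        if not subtasks:
            break
        done.extend(fake_worker(task) for task in subtasks)
    return done
```

The subtask list is not written anywhere in your code. It comes out of the planner at run time, and `max_rounds` is the only hard limit your code imposes.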

This uses several LLMs, but it’s NOT a multi-agent architecture: a single central LLM remains in control of the entire process. In multi-agent systems, agents can call each other directly without a central coordinator, and control can transfer between them.

Tradeoffs

The orchestrator can lose track of the original goal.

It breaks simple tasks into too many subtasks. And when workers finish faster than it can combine results, the orchestrator itself becomes the bottleneck.

These four patterns get gradually more powerful and more expensive…

Chaining and routing are cheap and predictable: you know what will happen before it runs. Parallelization saves time but costs more. The orchestrator-workers pattern handles problems you can’t predict upfront, but it introduces failure modes you can’t predict either. The first three patterns are simple to understand.

Most production systems shouldn’t need to go further than parallelization. When they do, the next four patterns hand control to the model itself...


Agent patterns: LLM controls the flow

With agent patterns, you STOP defining what happens and in what order.

Instead of defining steps, you define constraints: what tools are available, how much the model can spend, and when to stop. The model observes, reasons, and chooses what happens next.

That trust decision appears differently in each pattern: how much autonomy, over what actions, with what limits.
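To make "constraints instead of steps" concrete, here is a minimal sketch built on the order-cancellation example from earlier. Your code defines the tool list, a step budget, and a stop signal, and the model picks each action. `fake_model` is a hypothetical stand-in for the LLM's next-action decision, and the tools return canned values.

```python
from typing import Callable, Dict, List

# Constraint 1: the only actions the model may take.
TOOLS: Dict[str, Callable[[str], str]] = {
    "check_shipping": lambda order_id: "shipped",
    "check_refund_policy": lambda order_id: "refund allowed within 30 days",
}


def fake_model(history: List[str]) -> str:
    """Hypothetical LLM decision: picks the next action from what it has seen."""
    if not history:
        return "check_shipping"
    if history[-1] == "check_shipping -> shipped":
        return "check_refund_policy"
    return "STOP"


def run_agent(order_id: str, max_steps: int = 5) -> List[str]:
    history: List[str] = []
    for _ in range(max_steps):  # Constraint 2: a hard step budget
        action = fake_model(history)
        if action == "STOP":  # Constraint 3: an explicit stop signal
            break
        if action not in TOOLS:
            history.append(f"{action} -> rejected: unknown tool")
            continue
        history.append(f"{action} -> {TOOLS[action](order_id)}")
    return history
```

Nothing in `run_agent` says which tool runs first or how many run at all. The code only says what is allowed and when the loop must end.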

5. Reflection

Reflection is when the LLM generates output, reviews its own work, and then fixes the problems it found.
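A minimal sketch of that generate, review, fix cycle, assuming a fixed cap on the number of review rounds. `fake_generate` and `fake_critique` are hypothetical stand-ins for two LLM calls. In a real system, both would typically be prompts to the same model.

```python
from typing import Optional


def fake_generate(task: str, feedback: Optional[str] = None) -> str:
    """Hypothetical generation call; the first draft contains a bug."""
    if feedback is None:
        return "def add(a, b): return a - b"
    return "def add(a, b): return a + b"


def fake_critique(draft: str) -> Optional[str]:
    """Hypothetical review call; returns a problem description or None."""
    if "a - b" in draft:
        return "uses subtraction instead of addition"
    return None


def reflect(task: str, max_rounds: int = 2) -> str:
    draft = fake_generate(task)
    for _ in range(max_rounds):  # cap the loop so it always terminates
        feedback = fake_critique(draft)
        if feedback is None:
            break  # the reviewer found nothing left to fix
        draft = fake_generate(task, feedback)
    return draft
```

If the cap is reached, `reflect` returns the latest draft without reviewing it again, so a caller that needs a guarantee should run its own check on the result.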
